
The Vertical Moment Has Arrived in Generative Media: Will it Have Longevity?


If you're building in the AI space right now, you've probably felt the horizontal siren song: broader ICP, bigger market, millions more users who might fit your profile. It's a compelling pull.

But in a world where the application layer is increasingly dominated by model providers and hyperscalers building horizontal, generalist tools, a different pattern is emerging. To secure a foothold in users’ toolstacks and budgets, more companies are going narrower: a specific output type, opinionated choices about model orchestration, and deep integration into the real-world systems their customers already depend on. I’m seeing this crop up in the generative media space in particular, though I believe it holds across the app layer more generally.

However, when it comes to verticalization, the question has always been (whatever you’re selling): how narrow is too narrow?

We've Seen This Arc Before

To see where generative media might shake out, we don’t have to look far: voice AI got there first. The sequence was familiar. Foundation models improved, infrastructure providers proliferated (LiveKit, Vapi, Pipecat, ElevenLabs), and then value began accruing at the application layer in two distinct patterns.

Horizontal tools like Wispr Flow* are built for broad accessibility and a wide variety of use cases. They win on accuracy and personalization, capturing mind share as people learn that voice AI can be integrated into every aspect of their daily lives: sending Slack messages, writing code, and working faster on the go.

Rather than compete directly with the first movers, vertical tools went deep on specific use cases: Abridge for healthcare documentation, Avoca for HVAC call answering, Prepared for emergency call triage. Why did vertical work? Because these companies could harness the learnings from the horizontal players, particularly on the UI and UX side, and then go deep on a specific workflow that generalist tools weren’t built to prioritize.

Generative media is now at the same inflection point. fal* alone hosts hundreds of generative media models, and their recent State of Generative Media Report found that major releases arrived every 4 to 6 weeks in 2025. That is evidence the model and infrastructure layer is growing fast, and the pace is only accelerating.

But talking to founders and builders in the generative media space, one key difference stands out: the output types vary far more than in voice AI.

An image for an ad campaign, a video for social, a 3D render going into automotive production, a world simulation for robotics training — they're fundamentally different artifacts with different requirements, different downstream systems, and vastly different consequences when something goes wrong.

What Vertical Actually Means for Generative Media

When I say vertical in generative media, I don't mean industry in the traditional sense.

Take construction as an example. A rendering for a client pitch and permit drawings are both architect deliverables, but these artifacts are fundamentally different (and so are the consequences of getting them wrong). While a flawed rendering can be revised in an hour, a flawed permit set rejected by the building department can take months to resubmit and receive final approval.

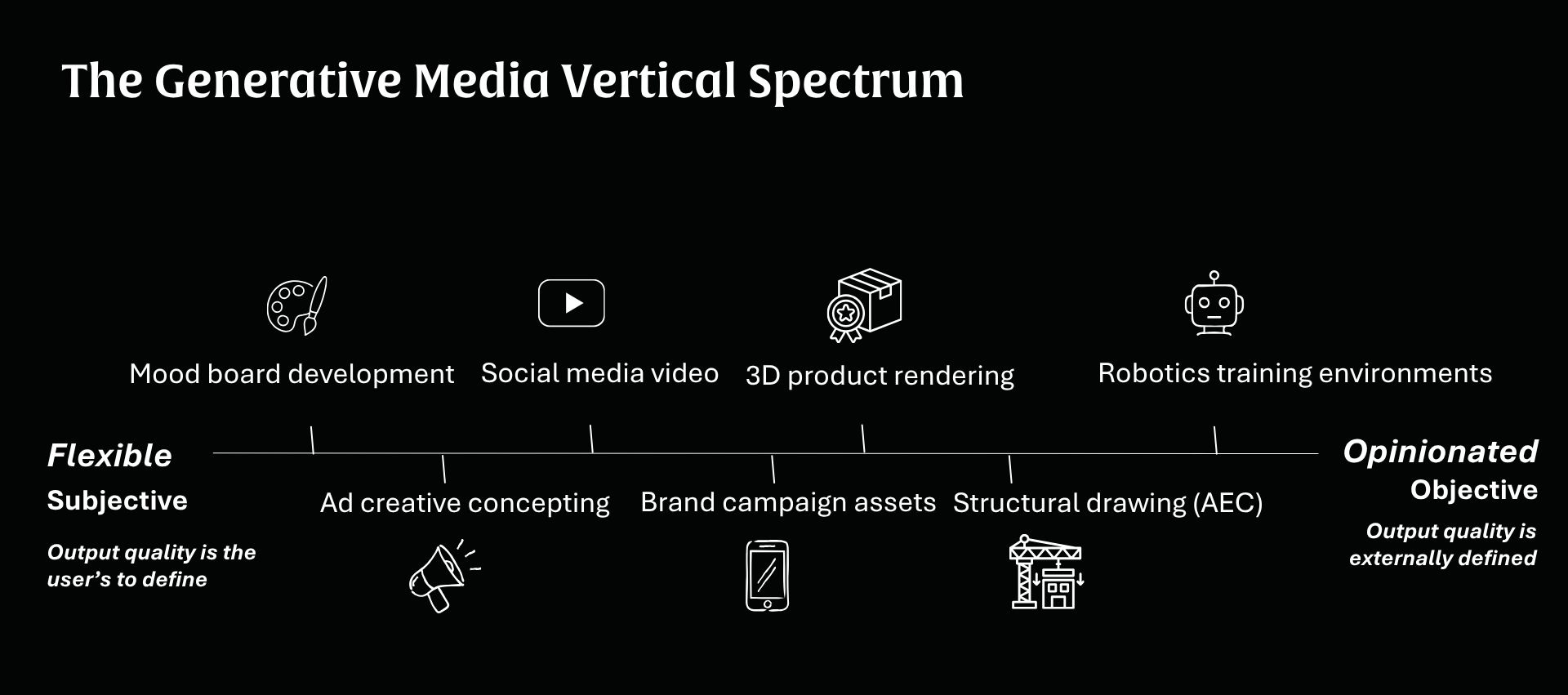

Different output types carry different requirements, which is why I argue a useful definition for verticalization in generative media is output type, not industry.

This distinction is a helpful framework to assess which companies will win in this next phase of innovation in the category.

The most instructive comparison I've seen is Flora versus Vizcom. They both build upon the model infrastructure that fal hosts, but what those companies do with that infrastructure is quite different.

Flora is a powerful horizontal tool for creative professionals. It gives users access to hundreds of models, lets them choose which model to apply to which task, and preserves the creative control designers expect from tools like Adobe. That's exactly right for their ICP: sophisticated creative professionals who know what they want and place deep value in maintaining control over the output.

Vizcom takes the opposite approach. Built for industrial and product designers (people designing shoes, furniture, automotive parts), it generates high-fidelity 3D renders, but you won't find a model selector anywhere in the product. You won't even see the word "AI" on the website. Vizcom makes all orchestration decisions for users, sequencing multiple models to produce outputs that meet manufacturing-grade standards.

That’s a deliberate bet that their users don’t want to choose between generative AI models; they just want to get from sketch to production-ready specification as fast as possible. And because Vizcom is solely focused on that one output type, they've become experts in which chain of models produces the highest-fidelity result. That expertise compounds over time.

Specialized tools also compound their advantage where the work demands complex, multi-disciplinary coordination, as in building engineering. Buildings require a dizzying array of experts (structural, civil, mechanical, and electrical engineers, landscape architects, and architects), all closely collaborating through BIM tools such as Revit and AutoCAD, which have decades of accumulated domain knowledge baked in. A horizontal AI tool can generate a building drawing. What it cannot do is flag when a generated design violates a building code constraint that a human expert would immediately recognize.

The Consequences Framework for Verticalization

Is “architecture” or “industrial design” too narrow a pond to fish from? I think the answer is nuanced, and the best historical comp to consider here is Veeva.

Pharma companies used Salesforce as their CRM and it worked, to a point. But the regulatory and compliance requirements of drug development were specific enough (and the consequences of getting them wrong severe enough) that Veeva built a $30B+ company by going narrow. The market that looked constrained turned out to be enormous — because when the stakes are high enough, customers consolidate around the tool that ensures they get it right.

That's the lens I'm applying to generative media: the consequences of the output.

A brand's social video is low-stakes. If the output is slightly off, the cost is minimal—a social media manager iterates and moves on. But a flawed product design going into manufacturing is a different category entirely. Catching a mistake at the render stage costs almost nothing. Catching it in production can cost millions. The same logic applies to a building design with a structural flaw, or a supply chain integration built on the wrong material spec.

Use cases with real-world, production-bound outputs carry stakes that horizontal tools aren't always built to handle. And that changes the economics: higher willingness to pay, stronger retention.

When the cost of a wrong output is meaningful and the domain knowledge required to get it right creates a moat that a generalist tool can't easily replicate, narrower markets produce very large outcomes, even while the horizontal players continue to thrive.

We're early enough that the right unit of specialization isn't fully clear. Where does "specific enough to be differentiated" tip into "narrow enough to be limiting"? That line is still being drawn. But history keeps proving that narrow, in the right place, turns out to be plenty to build an enormous business.